David Stairs

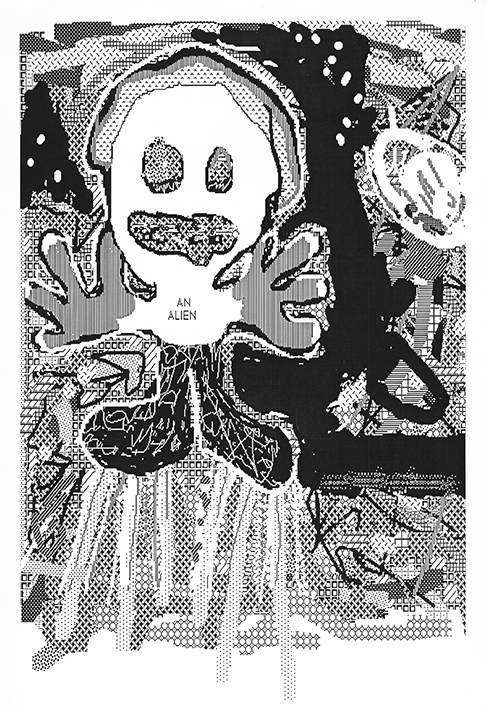

Courtesy of Lucien Stairs

You don’t have to look very far these days to see designers talking about the brave new world of Design AI. Helen Armstrong is out stumping her AI monograph, Big Data, Big Design. Mariana Amatullo is referencing it in the summer 2019 issue of Dialectic. And designers everywhere have become addicted to the Cloud, those banks of energy-gulping servers housed in over-cooled desert complexes by Alphabet and Amazon. But what does AI really mean to the future of design?

We already have programs that, like Microsoft Outlook, supposedly anticipate our every move. Except Outlook merely stumbles, creates spacing errors, and jettisons one onto other screens when it guesses wrong, which seems to be about 30% of the time. But don’t worry! AI is self-correcting! Which means that after it messes your work up for a couple of years, maybe it will outsource your job.

The dumbing down of design has been underway for several years now. We first saw it with the introduction of CSS, which was supposed to streamline web design and make sites more efficient. Publishers use CMS algorithms to “manage content.” Often this means bland, unreadable typesetting of 55-pica lines with uninspired titling and captioning, formatted to be quick and dirty. It is especially disheartening when design publishers, like Bloomsbury, resort to such methods.

The general use of AI, in government, business, or the military, is meant to crush reams of data to come up with optimized bureaucracy, purchasing, or logistics. The purpose when applied to design seems to be to improve the corporate bottom line by reducing the number of mistake-prone humans in the workplace. If you think a supercomputer or server bank sorting through the history of logos and marques to meet a quick deadline turnaround sounds like something out of Philip K. Dick, please note that machine-generated logo design is already here.

I’m not trying to romanticize creativity. The human potential movement has already accomplished that by telling every school kid that she/he is a winner. While I agree that most humans who are born healthy probably have the same quantum of inherent creativity, the long and winding road to realizing that potential is fraught with social roadblocks, economic setbacks, and character-building challenges that don’t have much to do with data sets, unless you define life experience as a data set.

A quick survey of internet sites relating to intelligent design and augmented reality yields a variety of material, from engineering white papers to sponsored sites. On one such site, the MIT Technology Review, Mike Haley of Autodesk argues for a “symbiotic relationship” between humans and computers in an effort to design more sustainable buildings. The MIT xPRO program, in collaboration with Emeritus, sells an 8-week course for tech professionals to “explore various design processes involved in AI-based products.” Both of these initiatives are examples of heavy academic/industry collaboration.

Lulu and other CMS publishing platforms, like Squarespace, Readymag, or The Grid, offer templates, pattern libraries, and generators that will select the “best” layout. Some algorithms attempt “to observe how the great designers work.” Guardian Headliner will “highlight eyes in a photo to emphasize emotion” while Vincent “transforms rough sketches into a painting from Van Gogh.” There does not seem to be much concern about originality in any of this. In fact, the one complaint I read among several articles and sites was how the leveling power of AI simply made everything look the same.

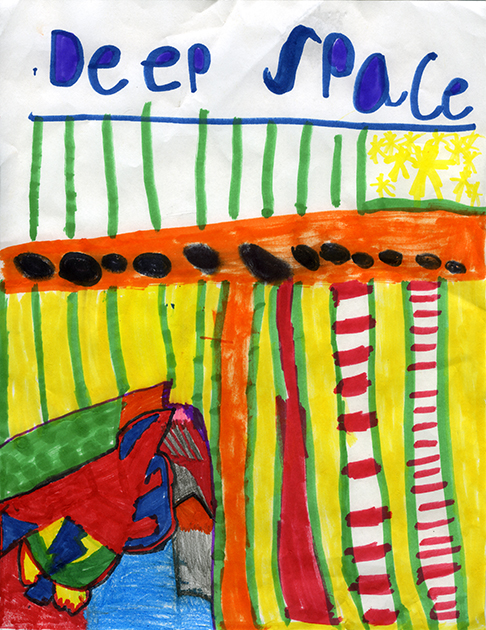

Master of Space Gravity by David Stairs after an original idea by Chris Stairs

Looking the same may be the goal of autocrats and students. Hitler and Stalin come to mind in the autocrat category. Their method of brokering uniformity was to eliminate all rival ideas. As for students, many of mine are enamored of using design presentation templates to demonstrate finished concepts to clients. Their work definitely looks spiffy as a result, but it is also polished to a degree many of them could not otherwise achieve, and it looks just like the work of everyone else using such templates.

The examples of AI wins by AlphaGo over Lee Sedol in Go, or Watson over Ken Jennings in Jeopardy, are so much less impressive when one realizes that they required teams of programmers and enormous computing power, enlisting the combined resources of all known variations and facts, just to beat puny, inefficient individual humans: a rather heavy-handed victory of metrics over intuition.

I’ve already complained about the tribulations of anticipatory design in Microsoft Outlook. The greater problem is that people are being handed another bait-and-switch: convenience for data. Anticipatory design requires personal information, reams of it. But is convenience valuable enough to cede irretrievable personal information to large corporations, especially in light of what we have seen from the likes of Facebook and Google?

Finally, there are many instances of what has come to be known as Machine Co-Creativity, along with the algorithms being used to promote it. Microsoft has one called Animation Autocomplete, which completes illustrations. Another example available in more than one online presentation is the drone body designed by an intuitive generative algorithm. The specifications call for a drone frame structure light enough to carry a certain payload using four propellers and their battery pack. The program then filters through hundreds of forms, adding and stripping away material until it arrives at an optimal shape, which happens to look a lot like the skeleton of a flying squirrel.

This last example seems self-prophesying, sort of like saying Nature knows best, or slow and steady wins the race. Evolution has worked well on Earth for a few billion years using this very method, but there’s nothing slow or steady about an AI algorithm, which is the potential problem. For the better part of a century we have been worried about mechanical men coming to take away our freedom. And since movies like Colossus: The Forbin Project in 1970, followed by Skynet in the Terminator series, and The Matrix, it’s been programming that has given us the heebie-jeebies, as well it should.

In Against Creativity, Oli Mould critiques efforts by corporations and municipalities to co-opt creativity for their own benefit. About AI he writes, “…rather than continuing to build complex autonomous systems that draw humans ever deeper into relationships with code, why not create systems with an ‘exit strategy’ in place? In other words, we need to make sure that these technological augmentations to our lives are easily removed. We need to make sure we can detach ourselves from them as easily as we can plug ourselves in.”

The acknowledged truth that ethics is simply too slow to keep up with technology does not leave us room for error. Given the many reasons to be wary, those rushing to jump on the design AI bandwagon are either being callow or incompletely honest when they say it is for the betterment of humanity, unless, of course, one is talking about the millions of clones that can be cranked out by random face generators. I, for one, don’t consider deep fakes part of the human family.

We’ll have to leave that call up to The Master of Space Gravity.

David Stairs is the founding editor of the Design-Altruism-Project.